Centralized AI Agent Governance for the Enterprise

Govern AI agents with audit trails, governance dashboards, and regulatory alignment. Ensure every AI decision is traceable, accountable, and compliant.

Deliver Accountable AI

Every agent decision is recorded with full context and traceability. Teams can reproduce, explain, and justify any AI action for internal review or regulatory audit.

Prevent Compliance Violations

Pre-configured compliance packs and runtime policy enforcement catch violations before they become incidents, reducing exposure to fines, penalties, and reputational damage.

Executive Visibility

Governance dashboards connect AI behavior to business outcomes. Leadership gets a unified view of compliance status, incident trends, and operational health.

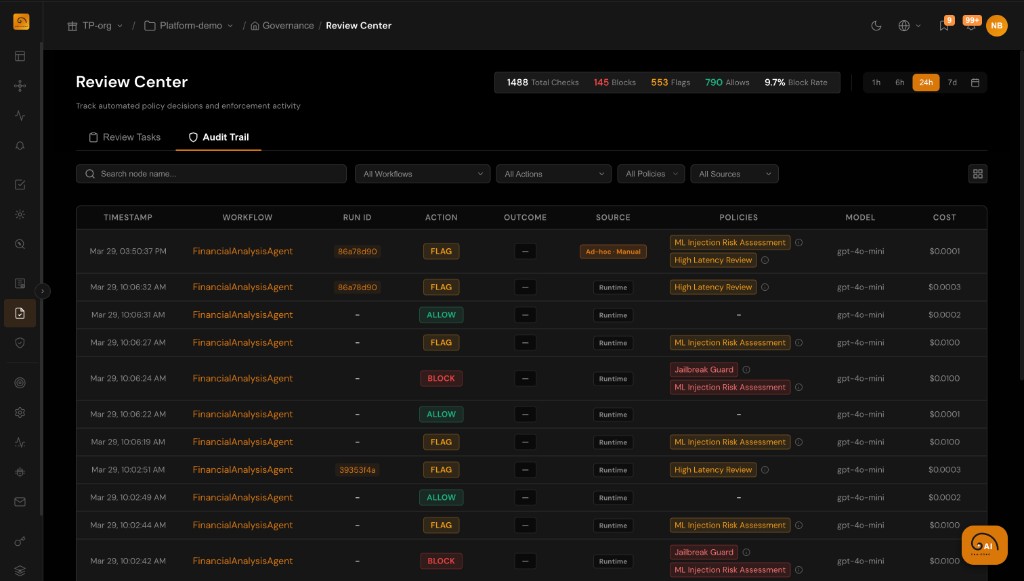

Complete Traceability for Every Decision

Every agent action is captured with its full execution context, creating an immutable record that satisfies internal governance and external regulatory requirements.

- Timestamped record of every decision, action, evaluation, and policy outcome

- Full execution context: inputs, reasoning, tool calls, outputs, and scores

- Immutable audit log that cannot be retroactively modified

- Filterable audit records for compliance evidence and regulatory review
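Tamper-evident audit logs are often built with hash chaining. Each record stores a hash of the record before it, so editing any past entry breaks verification. The sketch below illustrates that general technique in Python. It is not TuringPulse's implementation. The `AuditLog` and `AuditRecord` classes, their field names, and the `filter` helper are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class AuditRecord:
    # Hypothetical record shape mirroring the context listed above.
    timestamp: str
    agent_id: str
    action: str
    context: dict[str, Any]  # e.g. inputs, reasoning, tool calls, outputs, scores
    policy_outcome: str
    prev_hash: str
    record_hash: str


def _digest(payload: dict[str, Any], prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def _payload(r: AuditRecord) -> dict[str, Any]:
    # The fields covered by the hash (everything except the hash links).
    return {
        "timestamp": r.timestamp,
        "agent_id": r.agent_id,
        "action": r.action,
        "context": r.context,
        "policy_outcome": r.policy_outcome,
    }


class AuditLog:
    """Append-only, hash-chained log (hypothetical sketch)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    def append(self, agent_id: str, action: str,
               context: dict[str, Any], policy_outcome: str) -> AuditRecord:
        prev = self._records[-1].record_hash if self._records else self.GENESIS
        payload = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "context": context,
            "policy_outcome": policy_outcome,
        }
        record = AuditRecord(**payload, prev_hash=prev,
                             record_hash=_digest(payload, prev))
        self._records.append(record)
        return record

    def verify(self) -> bool:
        # Recompute every hash. Any edited or reordered record breaks the chain.
        prev = self.GENESIS
        for r in self._records:
            if r.prev_hash != prev or r.record_hash != _digest(_payload(r), prev):
                return False
            prev = r.record_hash
        return True

    def filter(self, **criteria: Any) -> list[AuditRecord]:
        # Equality filter on top-level fields, e.g. policy_outcome="violation".
        return [r for r in self._records
                if all(getattr(r, k) == v for k, v in criteria.items())]
```

Hash chaining makes tampering detectable rather than impossible. For stronger guarantees it can be paired with write-once (WORM) storage.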

Meet Regulatory Requirements with Confidence

Pre-configured compliance packs translate regulatory requirements into enforceable policies, and TuringPulse automatically generates the evidence needed for audits.

- Compliance packs for HIPAA, GDPR, SOC 2, and configurable custom frameworks

- Automatic evidence generation aligned with regulatory review requirements

- Policy enforcement tied to compliance rules — violations are caught at runtime

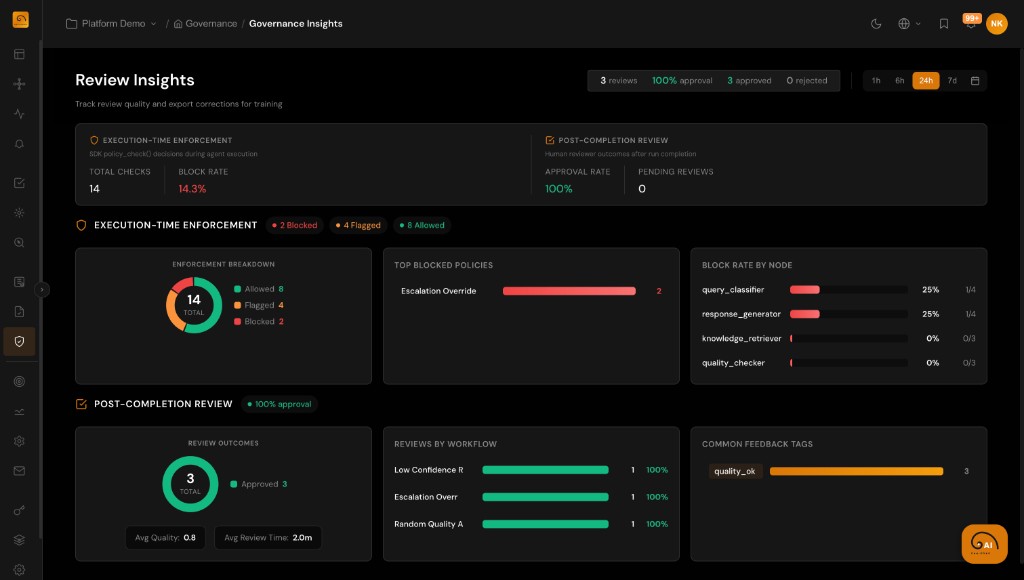

- Review insights surface compliance trends, violations, and resolution status
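Runtime enforcement generally means checking each proposed agent action against a set of rules *before* it executes and blocking it if any rule fails. The Python sketch below shows that pattern under stated assumptions. The `Policy` class, the `enforce` function, the action dictionary keys, and both example rules are hypothetical. They are illustrative only, not legal guidance, and not how TuringPulse compliance packs are defined.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Policy:
    # Hypothetical policy shape: a named rule tagged with a compliance framework.
    name: str
    framework: str
    violates: Callable[[dict[str, Any]], bool]


class PolicyViolation(Exception):
    """Raised when a proposed action breaks one or more policies."""


def enforce(action: dict[str, Any], policies: list[Policy]) -> dict[str, Any]:
    # Evaluate every policy before the action runs; block it if any fail.
    failed = [p for p in policies if p.violates(action)]
    if failed:
        names = ", ".join(f"{p.framework}:{p.name}" for p in failed)
        raise PolicyViolation(f"blocked {action.get('tool')!r}: {names}")
    return action


# Illustrative rules only; a real compliance pack would encode far more.
POLICIES = [
    Policy(
        name="no-ssn-in-external-calls",
        framework="HIPAA",
        violates=lambda a: bool(a.get("external")) and "ssn" in a.get("fields", []),
    ),
    Policy(
        name="require-consent-flag",
        framework="GDPR",
        violates=lambda a: a.get("personal_data", False) and not a.get("consent", False),
    ),
]
```

Raising an exception keeps the blocked action from reaching the tool. The caught `PolicyViolation` can then be written to the audit log as the policy outcome.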

Executive Dashboards for AI Operations

Give leadership a unified view of AI performance, compliance status, and operational health across all teams and applications.

- Connect agent behavior and performance to business KPIs

- Track ownership, responsibilities, and approvals across applications

- Monitor incident trends, resolution times, and compliance health

- Role-based access ensures the right stakeholders see the right data